The ops hire that onboards in 30 seconds.

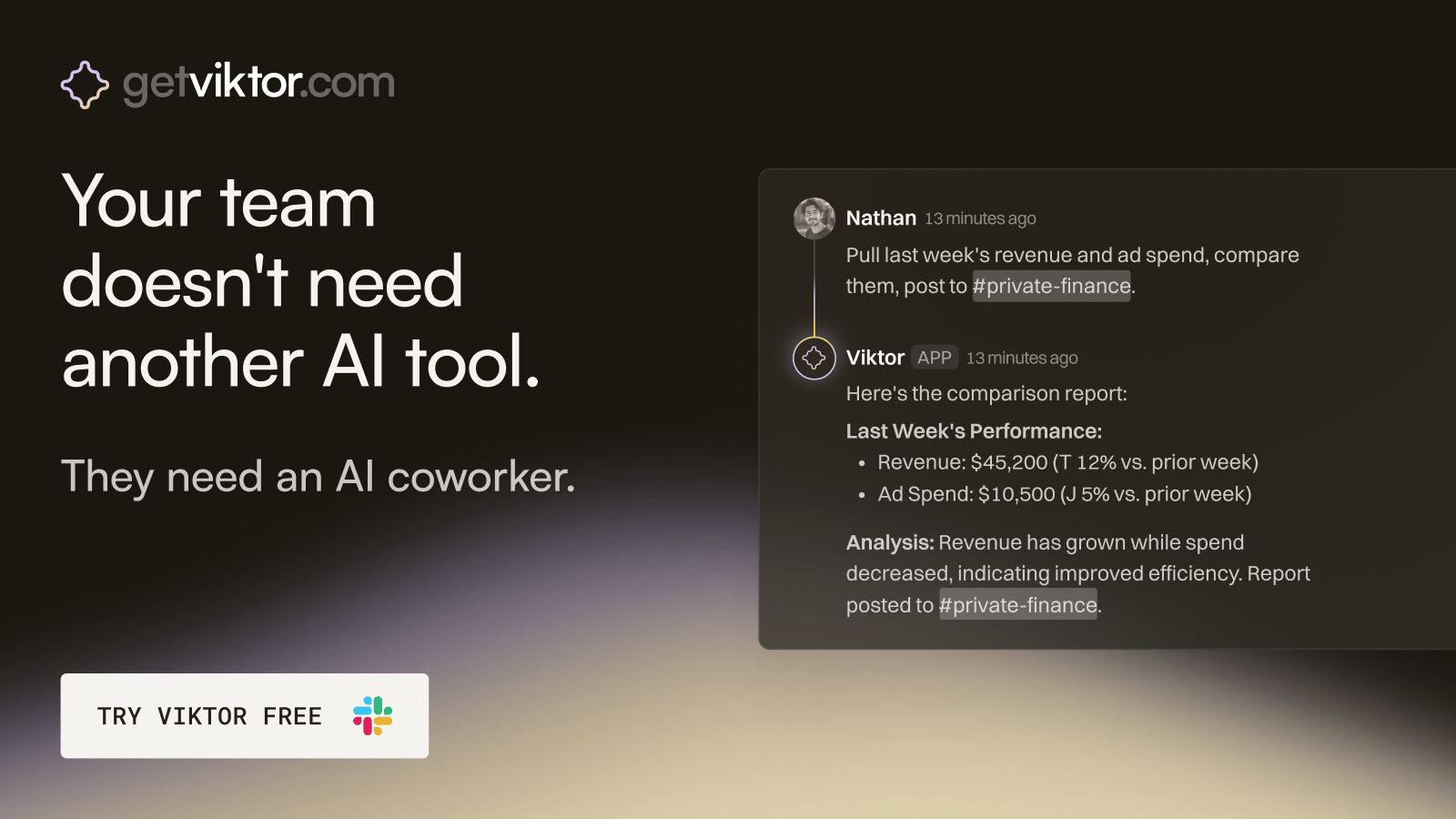

Viktor is an AI coworker that lives in Slack, right where your team already works.

Message Viktor like a teammate: "pull last quarter's revenue by channel," or "build a dashboard for our board meeting."

Viktor connects to your tools, does the work, and delivers the actual report, spreadsheet, or dashboard. Not a summary. The real thing.

There’s no new software to adopt and no one to train.

Most teams start with one task. Within a week, Viktor is handling half of their ops.

There is a joke that has been going around Silicon Valley for years.

You want a voice assistant to set a timer? Use Siri. You want it to actually understand you, answer a real question, or do something genuinely useful? Use literally anything else.

Apple knew this joke was about them. For years, the company kept telling its engineers to fix Siri internally, and the answer always came back the same: it is going to take longer than we thought.

Then in January 2026, Apple did something that would have been genuinely unthinkable five years ago. They called Google.

What Actually Happened

On January 12, 2026, Apple and Google put out a joint statement confirming something that had been rumoured for months. The two companies have entered a multi-year partnership in which Google's Gemini AI models will power the next generation of Apple Foundation Models, including a completely reimagined version of Siri.

Apple is paying around $1 billion per year for the arrangement.

Let that number sink in for a moment. Apple, a company with nearly $4 trillion in market value and some of the smartest engineers on earth, decided it was faster and smarter to pay its biggest rival a billion dollars a year than to keep trying to build this in-house.

That tells you everything you need to know about how serious the AI race has become.

The new Siri powered by this partnership is set to debut with iOS 26.4, rolling out to iPhones in late March or April 2026. This is not a minor update. This is a complete reimagining of what Siri can do.

Why Apple Gave Up on Building This Itself

To understand why Apple did this, you need to understand what has been happening inside the company for the past two years.

Apple first teased a dramatically smarter Siri at its developer conference in 2024. The demos looked impressive. The reality did not match. Apple quietly delayed the launch and told the public it was going to take longer than expected. Behind the scenes, the company was scrambling.

The fundamental problem was not intelligence or engineering talent. Apple has plenty of both. The problem was data and time. Training the kind of large language model that powers ChatGPT or Gemini requires years of work and enormous amounts of computing resources. OpenAI had a multi-year head start. Google had been building toward this for even longer.

Before settling on Google, Apple also explored working with OpenAI and Anthropic. Talks with Anthropic reportedly fell apart because Anthropic wanted several billion dollars annually over multiple years. The OpenAI relationship was complicated by the fact that OpenAI was actively developing its own hardware with former Apple design chief Jony Ive and had hired away Apple talent. Google ended up being the most practical choice for several reasons, including an existing legal and commercial relationship worth around $20 billion a year through the default search deal on iPhones.

What the New Siri Will Actually Do

This is the part that matters to most iPhone users.

The model powering the new Siri is internally known as Apple Foundation Models version 10. It uses a 1.2 trillion parameter Gemini model, which for context is among the largest and most capable AI models that exist anywhere in the world right now.

What that means in practice is a Siri that finally understands context the way a human assistant would.

The new version will be aware of what is on your screen at any moment. If you are reading an email about a dinner reservation and you ask Siri about the restaurant, it already knows what restaurant you mean without you having to explain. It will understand your personal context, meaning it can look at your messages, calendar, and emails to give you answers that are actually relevant to your specific life rather than generic responses.

You will be able to ask it things like "when is my mum's flight landing" and it will check your Messages app and give you the answer. You can say "remind me about my meeting prep" and it will find the relevant notes and documents without you having to specify which ones. It will be able to take actions inside apps on your behalf, not just open them.

For anyone who has spent years asking Siri something and getting a web search result in return, this is genuinely a different product.

The Privacy Question Everyone Is Asking

The first concern is an obvious one. If Google's AI is powering Siri, is Google reading everything you ask?

Apple has been very deliberate in addressing this and the architecture of the deal is worth understanding.

The Gemini models are running on Apple's own Private Cloud Compute servers, not on Google's infrastructure. What this means in practice is that Apple has essentially taken Google's AI brain and housed it inside Apple's own privacy-controlled building. Google supplies the intelligence. Apple controls the walls, the security, and the rules about what leaves the room.

Apple CEO Tim Cook described it on the company's earnings call as a collaboration where Apple will continue to run on-device and in Private Cloud Compute while maintaining its industry-leading privacy standards. Simple requests are handled entirely on your device. More complex queries go to Apple's cloud. Only the most demanding tasks touch anything beyond that, and always through Apple's privacy infrastructure rather than Google's.
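
As a rough illustration of that tiering, here is a minimal sketch of how a request might be routed to the smallest tier that can handle it. This is not Apple's implementation: the complexity score, the thresholds, and every name in it are invented for the example.

```python
from enum import Enum


class Tier(Enum):
    """The three tiers described above. Labels are illustrative only."""
    ON_DEVICE = "on-device model"
    PRIVATE_CLOUD = "Apple Private Cloud Compute"
    LARGE_MODEL = "largest model, reached through Apple's privacy infrastructure"


# Hypothetical cut-offs on a made-up 0-to-1 complexity score.
ON_DEVICE_MAX = 0.3
PRIVATE_CLOUD_MAX = 0.8


def route(complexity: float) -> Tier:
    """Return the smallest tier assumed able to handle the request."""
    if not 0.0 <= complexity <= 1.0:
        raise ValueError("complexity must be between 0 and 1")
    if complexity <= ON_DEVICE_MAX:
        return Tier.ON_DEVICE        # e.g. setting a timer
    if complexity <= PRIVATE_CLOUD_MAX:
        return Tier.PRIVATE_CLOUD    # e.g. summarising a long email thread
    return Tier.LARGE_MODEL          # only the most demanding tasks


if __name__ == "__main__":
    for score in (0.1, 0.5, 0.95):
        print(score, "->", route(score).value)
```

The point of the sketch is the ordering: a request only moves up a tier when the one below cannot handle it, so most of what you ask never leaves the phone.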

Whether you fully trust that arrangement depends on how much you trust Apple's privacy commitments generally. But the architecture is meaningfully different from simply sending everything to Google the way an Android phone might.

One detail worth noting: you will never see the word Gemini anywhere on your iPhone. The deal is structured so that Gemini's role is completely white-labelled. From your perspective it is still Siri, just a dramatically smarter version.

What This Means for Google

For Google, this deal is arguably bigger news than it is for Apple.

Google already pays Apple around $20 billion a year to be the default search engine on iPhones. Now its AI model will be running inside the device too, powering the personal assistant that touches almost everything a user does.

The partnership instantly makes Gemini the default AI engine across both Android and iOS simultaneously. That is access to billions of mobile users in a single deal, which is why the announcement pushed Google's market cap past $4 trillion for the first time.

It is also a significant statement about where Google stands in the AI race. Apple evaluated OpenAI, Anthropic, and Google side by side before making this decision. Choosing Google's technology above all others is a validation that carries enormous weight, both commercially and in terms of perception among enterprises that are deciding which AI platforms to build on.

What This Means for Everyone Else

OpenAI has the most to lose here. Apple and OpenAI already had a partnership that allowed users to access ChatGPT through Siri for complicated queries. That arrangement technically still exists, but with Gemini now at the centre of Apple's AI strategy, ChatGPT has been pushed to a secondary position. Apple told journalists it is not making any changes to the OpenAI agreement, but being the backup option behind a 1.2 trillion parameter Gemini model is a different position than being the primary partner.

For Anthropic, the story is even more direct. Apple's talks with Anthropic fell apart over price. Being excluded from what may be the largest AI integration deal in history is a significant miss.

For everyone building AI products and tools, the broader message is clear. The AI race is no longer just about which model scores highest on benchmarks. It is about which model gets embedded into the platforms that billions of people use every single day without thinking about it. On that measure, Apple just handed Google a very powerful position.

The Bigger Story Nobody Is Saying Out Loud

Here is what makes this moment genuinely historic.

Apple and Google have been competitors and occasional frenemies for fifteen years. The fight over default search, the antitrust battles, the ecosystem rivalries. These are two companies that have spent enormous energy trying to outmanoeuvre each other across almost every product category.

And yet when Apple needed the best AI in the world to save Siri, they went to Google. Not because they had no choice, but because after testing every option available, they concluded that Google had built the best thing.

That is not a story about defeat. It is a story about how serious this technology has become. When the pressure is real enough, the competitive instinct gives way to the pragmatic one.

The trillion-dollar question now is whether Apple plans to stay dependent on Google long term or whether this billion-dollar-a-year arrangement is buying time while Apple builds something of its own. Apple's engineers are already working on what they are calling Apple Foundation Models version 11 for iOS 27, which is described as significantly more capable than the current Gemini-powered version.

The race is not over. It just changed shape.

In the meantime, go update your iPhone. For the first time in a long time, that update might actually be worth getting excited about.

— Roo